How to Deploy a Sovereign AI Agent: Architecture and Implementation of Clawdbot

The digital landscape is shifting from passive chatbots to active AI agents. Clawdbot represents an architectural evolution, moving intelligence from centralized cloud services to the user's local infrastructure. Unlike traditional conversational models that forget context after a session, this self-hosted autonomous runtime is designed for persistence and system-level control. This guide provides a technical analysis of its architecture, deployment strategies, and operational methods for integrating a truly sovereign AI assistant into complex digital workflows.

Understanding the Sovereign Agent Paradigm

To maximize the utility of advanced machine intelligence, it is critical to distinguish between a standard chatbot and an active agent. A chatbot is fundamentally a text-generation engine that receives input and produces output, often forgetting the conversation context upon session closure. An agent, conversely, possesses agency—the capacity to act.

Agent vs. Chatbot Capability

Clawdbot bridges the cognitive power of Large Language Models (LLMs) with functional capabilities. It is not merely a conversational partner; it is an operator that can execute terminal commands, manipulate the filesystem, browse the live web, and interact with the user through messaging platforms such as WhatsApp, Telegram, and Discord. This transition allows the agent to move beyond consultation and actively manage tasks.

The Local-First Architecture

Clawdbot is a self-hosted system that runs on user infrastructure, whether a local Macintosh or a dedicated Virtual Private Server (VPS). This local-first design ensures that the AI functions as a secure extension of the user's operating system, maintaining data sovereignty and enabling deep integration with local files and applications.

Architectural Fundamentals and Memory Management

The system's architecture relies on modularity and connectivity, functioning as a central orchestration layer that facilitates autonomous action.

The Node.js Gateway Model

The core of the system is a Node.js daemon that acts as the Gateway. This central component manages persistent connections to various messaging Transports. When a message arrives, the Gateway normalizes the data, routes it to the AI agent runtime, and manages the execution of commands on the host operating system, securely returning the results back to the user.

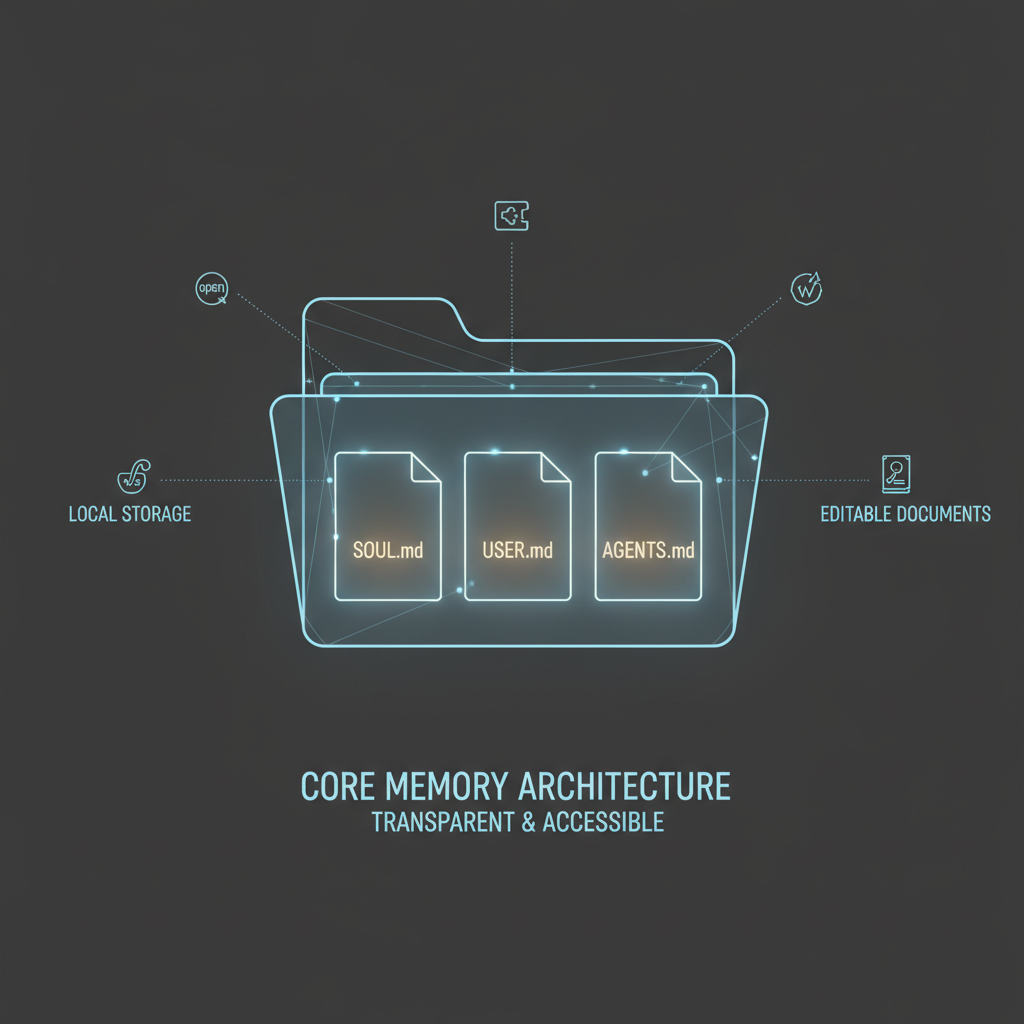
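
The normalize-and-route step described above can be sketched roughly as follows. This is an illustrative Python model, not the Gateway's actual Node.js code: the `Message` shape, the payload fields, and the function names are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Message:
    """Transport-independent message shape (hypothetical, for illustration)."""
    transport: str
    sender: str
    text: str


def normalize_telegram(raw: dict) -> Message:
    # Assumed payload shape; a real Telegram transport may differ.
    return Message("telegram", str(raw["from"]["username"]), raw["text"].strip())


def normalize_whatsapp(raw: dict) -> Message:
    # Assumed payload shape; a real WhatsApp transport may differ.
    return Message("whatsapp", str(raw["phone"]), raw["body"].strip())


NORMALIZERS: dict[str, Callable[[dict], Message]] = {
    "telegram": normalize_telegram,
    "whatsapp": normalize_whatsapp,
}


def handle_incoming(transport: str, raw: dict, agent: Callable[[Message], str]) -> str:
    """Normalize a raw payload, pass it to the agent runtime, and return the reply."""
    try:
        normalizer = NORMALIZERS[transport]
    except KeyError:
        raise ValueError(f"unsupported transport: {transport}") from None
    return agent(normalizer(raw))


def echo_agent(message: Message) -> str:
    # Stand-in for the LLM-backed agent runtime.
    return f"[{message.transport}] {message.sender}: {message.text}"
```

Calling `handle_incoming("telegram", {"from": {"username": "alice"}, "text": " hi "}, echo_agent)` passes the agent a single uniform `Message`, so the runtime never needs to know which platform a request came from.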

Filesystem as Memory (The Agent's Brain)

In a departure from proprietary databases, Clawdbot employs the filesystem itself as its persistent memory store. The agent’s core context is stored in simple, editable Markdown files within a designated workspace directory (typically ~/clawd). This approach keeps the agent's memory transparent and portable: the files can be read, edited, and copied with ordinary tools.

Critical configuration files include:

- SOUL.md: Defines the agent's persona, ethical boundaries, and desired tone.

- USER.md: Stores long-term biographical details, user preferences, and facts learned over time.

- AGENTS.md: Contains operational instructions and context for ongoing tasks.

- TOOLS.md: A user-maintained manual detailing how the agent should utilize its specific installed skills.
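
As a rough illustration of how file-based memory could be turned into model context, the sketch below concatenates whichever of the four files exist in a workspace. The function name, the section-header format, and the loading order are assumptions for the example; the actual runtime may assemble its context differently.

```python
from pathlib import Path

# File names taken from the list above; the order here is an assumption.
MEMORY_FILES = ("SOUL.md", "USER.md", "AGENTS.md", "TOOLS.md")


def build_context(workspace: Path) -> str:
    """Concatenate the memory files that exist into one context string."""
    sections = []
    for name in MEMORY_FILES:
        path = workspace / name
        if path.is_file():
            body = path.read_text(encoding="utf-8").strip()
            # Label each section so the model can tell the files apart.
            sections.append(f"## {name}\n{body}")
    return "\n\n".join(sections)
```

For the typical workspace, this would be called as `build_context(Path.home() / "clawd")`. Because the store is plain Markdown, missing files are simply skipped rather than treated as errors.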

Infrastructure and Deployment Strategy

Deploying a sovereign agent is a systems integration task requiring specific infrastructure choices.

Choosing the Runtime Environment

The operational environment must be selected based on required availability and access:

- Local Macintosh: Provides direct access to local files and desktop environments. This option is suitable for desktop assistants but requires the machine to remain active to function.

- Dedicated VPS (Always-On): For a robust, production-grade assistant, a Linux VPS is recommended. Since the heavy computational lifting (inference) is offloaded to API providers (e.g., Anthropic), a minimal $5/month VPS is typically sufficient to run the Node.js runtime 24/7. Dockerization is available to simplify setup and enhance security.

Prerequisite Configuration

The host environment must be configured with a specific runtime (Node.js version 22 or higher). Installation often involves using package managers like pnpm and configuring system permissions, such as granting Full Disk Access on macOS, to enable the process to interact with local files and databases (like iMessage).
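
A quick pre-flight check for the Node.js requirement might look like the sketch below. It relies only on the standard `node --version` output format (for example `v22.3.0`); the helper names are hypothetical and not part of any Clawdbot installer.

```python
import re
import shutil
import subprocess

MIN_NODE_MAJOR = 22  # minimum version stated above


def parse_node_major(version_output: str) -> int:
    """Extract the major version from `node --version` output such as 'v22.3.0'."""
    match = re.match(r"v?(\d+)\.", version_output.strip())
    if match is None:
        raise ValueError(f"unrecognized version string: {version_output!r}")
    return int(match.group(1))


def node_is_supported() -> bool:
    """Return True if a `node` binary on PATH meets the minimum major version."""
    node = shutil.which("node")
    if node is None:
        return False  # Node.js is not installed or not on PATH
    result = subprocess.run(
        [node, "--version"], capture_output=True, text=True, check=True
    )
    return parse_node_major(result.stdout) >= MIN_NODE_MAJOR
```

Running `node_is_supported()` before installation gives an early, explicit failure instead of an obscure runtime error later.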

Achieving Agency: Tools, Skills, and Automation

Agency is realized through a suite of native tools that allow the LLM to effect change in the user's environment.

Shell Access (The Terminal Interface)

The exec tool grants the Agent direct access to the system shell (Bash/Zsh). This is the most powerful capability, allowing the Agent to manage files, install software, run custom scripts, and interact with operating system binaries. Due to the inherent risk, this feature requires strict security governance and careful sandboxing.
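
One simple form of governance is a binary allowlist placed in front of command execution. The sketch below is a hypothetical guard, not the behavior of the actual exec tool: the allowed set, the function names, and the timeout are assumptions chosen for illustration.

```python
import shlex
import subprocess

# Hypothetical policy: only these binaries may be invoked.
ALLOWED_BINARIES = {"ls", "cat", "echo", "git"}


def is_allowed(command: str) -> bool:
    """Return True if the command's first token is an allowed binary."""
    try:
        tokens = shlex.split(command)
    except ValueError:  # e.g. unbalanced quotes
        return False
    return bool(tokens) and tokens[0] in ALLOWED_BINARIES


def guarded_exec(command: str, timeout_s: float = 10.0) -> str:
    """Run an allowed command without a shell and return its stdout."""
    if not is_allowed(command):
        raise PermissionError(f"command not permitted: {command!r}")
    result = subprocess.run(
        shlex.split(command),  # argument list: no shell expansion or chaining
        capture_output=True,
        text=True,
        timeout=timeout_s,
        check=True,
    )
    return result.stdout
```

Passing an argument list instead of a shell string means that input like `echo hi; rm -rf /` is treated as arguments to `echo`, not as two commands. An allowlist like this narrows the attack surface but is not a sandbox; it complements, rather than replaces, running under a low-privilege account.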

Browser Automation and Web Interaction

The built-in browser tool launches a headless instance of Chromium. This enables the Agent to perform actions impossible for standard API models, such as navigating complex websites, filling out login forms, scraping real-time data from sites without APIs, and interacting with specific web elements.

Extensibility through Skills

The core functionality is extensible via the Skills system, managed through the centralized community registry (ClawdHub). Skills are modular packages that interface with third-party services. Examples include:

- Productivity: Integrating with Google Calendar to manage schedules.

- Communication: Using the Telegram or WhatsApp Transports.

- Media: Utilizing ElevenLabs API for text-to-speech voice replies.

Cognitive Tuning and Chain of Thought

The depth of the agent’s internal reasoning can be manually configured using the /think command. Setting the thinking mode to “high” triggers the Agent to generate an extensive, hidden internal monologue—a Chain of Thought (CoT)—before executing a tool or sending a reply. While increasing latency and token consumption, this significantly improves the success rate of complex, multi-step autonomous tasks.

Practical Workflows and Operational Excellence

Clawdbot is designed to automate complex processes that require persistent context and interaction with multiple tools.

Advanced Research and Synthesis

The agent excels at processing external information. A user can provide a link to a lengthy article and instruct the agent to analyze it based on saved context (e.g., 'Summarize this investment thesis'). The agent reads the text, filters for relevance, and saves the detailed summary, thesis, and links directly into the user’s organized memory files, bypassing the need for manual knowledge management systems.

Automated Task and Project Management

The agent can function as an integrated project manager. It can process voice notes or chat messages, transcribe them, understand the intent, and then take action: setting a calendar reminder, creating a task, or sending a directive to a team member in a group chat. Because it can manage tasks and integrate with scheduling tools, the agent can act proactively, shifting the interaction model from passive request-response to active push notifications.

Autonomous System Improvement (Self-Healing)

Leveraging its shell access, the agent can be tasked with self-improvement. For example, a user can ask the agent to acquire a new capability (e.g., QR code generation). The agent will autonomously search for the required library, use the exec tool to install it, write the necessary software wrapper, and update its own internal documentation (TOOLS.md), permanently upgrading its skill set without human intervention.
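
The final step, recording a new capability in TOOLS.md, can be pictured as an idempotent append. The entry layout below (a `##` heading followed by usage text) is an assumed convention for illustration, since TOOLS.md is free-form Markdown, and `register_tool` is a hypothetical helper.

```python
from pathlib import Path


def register_tool(tools_md: Path, name: str, usage: str) -> bool:
    """Append a tool entry to TOOLS.md unless one with the same heading exists."""
    heading = f"## {name}"
    existing = tools_md.read_text(encoding="utf-8") if tools_md.exists() else ""
    if heading in existing.splitlines():
        return False  # already documented; leave the file unchanged
    entry = f"{heading}\n\n{usage.strip()}\n"
    prefix = existing.rstrip("\n")
    # Separate the new entry from earlier content with one blank line.
    new_text = f"{prefix}\n\n{entry}" if prefix else entry
    tools_md.write_text(new_text, encoding="utf-8")
    return True
```

Making the update idempotent matters for an autonomous agent: if a task is retried, the documentation does not accumulate duplicate entries.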

Security, Maintenance, and Token Costs

Running a sovereign agent necessitates managing operational overhead not present in SaaS environments.

Security and Access Control

Granting an AI agent shell access requires stringent security policies. Clawdbot utilizes a strict 'Allowlist' model, requiring explicit listing of allowed user identifiers (e.g., phone numbers or usernames) in the configuration file to prevent unauthorized commanding. Operational best practice is to run the agent daemon as a dedicated, non-root user, which limits what a destructive command can reach.
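
The allowlist check itself is a deny-by-default membership test. The sketch below shows the idea; the normalization rules and function names are assumptions for illustration and do not reflect how Clawdbot actually parses its configuration.

```python
def normalize_id(identifier: str) -> str:
    """Canonicalize a sender identifier for comparison (assumed rules)."""
    ident = identifier.strip()
    if ident.startswith("+"):
        # Phone number: keep the plus sign and digits only.
        return "+" + "".join(ch for ch in ident if ch.isdigit())
    # Username: drop a leading '@' and ignore case.
    return ident.lstrip("@").lower()


def is_authorized(sender: str, allow_from: list[str]) -> bool:
    """Deny by default: only senders on the allowlist may command the agent."""
    allowed = {normalize_id(entry) for entry in allow_from}
    return normalize_id(sender) in allowed
```

With an allowlist of `["+1 555 010 0199", "@Alice"]`, messages from `+15550100199` and `alice` are accepted and everything else is dropped. An empty list authorizes no one, which is the safe failure mode.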

Managing Cognitive Resources and Costs

While infrastructure costs are minimal, the continuous usage of high-capability LLMs (like Claude Opus) can lead to significant token consumption, especially when running in 24/7 or 'high-thinking' mode. Users must implement budget caps and monitor API usage closely. For maintenance, the /reset or /new command clears the short-term context (RAM) while preserving the long-term memory (on disk), effectively refreshing the agent's session without losing learned data.
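
A budget cap can be enforced in code as well as in the provider's dashboard. The sketch below tracks estimated spend per request and refuses any request that would exceed the cap. The class names are hypothetical, and the per-token rates are placeholders to be replaced with the provider's published pricing.

```python
from dataclasses import dataclass


class BudgetExceeded(RuntimeError):
    """Raised when a request would push spending past the cap."""


@dataclass
class TokenBudget:
    cap_usd: float
    usd_per_million_input: float   # placeholder rate, not a real price
    usd_per_million_output: float  # placeholder rate, not a real price
    spent_usd: float = 0.0

    def cost(self, input_tokens: int, output_tokens: int) -> float:
        """Estimated cost in USD for one request."""
        return (
            input_tokens * self.usd_per_million_input
            + output_tokens * self.usd_per_million_output
        ) / 1_000_000

    def charge(self, input_tokens: int, output_tokens: int) -> float:
        """Record a request's cost, refusing it if the cap would be exceeded."""
        cost = self.cost(input_tokens, output_tokens)
        if self.spent_usd + cost > self.cap_usd:
            raise BudgetExceeded(f"cap of ${self.cap_usd:.2f} would be exceeded")
        self.spent_usd += cost
        return cost
```

Checking the cap before each request, rather than after, is what stops a looping autonomous task from running up a bill unnoticed.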

Common Mistakes to Avoid

- Failing to Sandbox: Running the agent as a root or administrative user exposes the entire system to accidental or malicious commands executed by the AI. Always use a dedicated, low-privilege user account.

- Ignoring Security Allowlists: Failing to configure the allowFrom settings means the agent may accept commands from any contact on a connected messaging platform, leading to unauthorized actions and high token spend.

- Overlooking API Costs: Assuming the system is purely local. The core reasoning and action planning rely on external LLM APIs, and forgetting to set budget limits can result in runaway expenses from complex, looping autonomous tasks.

- Mismanaging Context: Allowing sessions to run indefinitely without a reset. This can lead to context exhaustion, where the LLM becomes confused or performs poorly due to a bloated, unmanaged short-term memory cache.
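
To make the last point concrete, the sketch below models a session whose short-term history is tracked against a size threshold and cleared on reset, while anything on disk is left alone. The `Session` class and the character-based threshold are illustrative assumptions; a real runtime would more likely count tokens.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    max_context_chars: int  # hypothetical threshold for suggesting a reset
    history: list[str] = field(default_factory=list)

    def add(self, message: str) -> None:
        """Append a message to the short-term history."""
        self.history.append(message)

    def context_size(self) -> int:
        """Total characters currently held in short-term history."""
        return sum(len(m) for m in self.history)

    def needs_reset(self) -> bool:
        """True once the history has grown past the threshold."""
        return self.context_size() > self.max_context_chars

    def reset(self) -> None:
        """Clear short-term history; memory files on disk are untouched."""
        self.history.clear()
```

A check such as `needs_reset()` after each exchange gives a simple trigger for a reset before the context degrades.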

Frequently Asked Questions

Why use a self-hosted agent instead of a standard AI chatbot?

Clawdbot persists context across days and sessions, integrates directly with local system tools (files, apps, CLI), and can perform proactive tasks via scheduled automation. Centralized cloud-based services generally cannot offer this level of local access because of privacy and security constraints.

Is dedicated hardware necessary to run Clawdbot?

No. While dedicated hardware like a Mac Mini ensures consistent availability, the computational inference is offloaded via API keys to external LLM providers. A minimal cloud VPS is typically sufficient for running the Node.js runtime and maintaining 24/7 operation.

What are the primary security risks of running an autonomous agent with shell access?

The primary risk is the agent executing unintended or destructive commands on the host system. This is mitigated by running the agent in a sandboxed, non-root user environment and utilizing strict configuration allowlists to limit who can command the agent.

Can the agent continuously learn and improve its own capabilities?

Yes. By utilizing its shell access and filesystem manipulation capabilities, the agent can autonomously install new libraries, write wrapper scripts to create new tools, and update its own internal documentation, achieving a state of recursive self-improvement.

Key Terms

- Agentic AI: An artificial intelligence system possessing the capacity to act upon its environment, execute multi-step plans, and utilize external tools, rather than merely responding conversationally.

- Sovereign Agent: An AI assistant that is self-hosted on the user's own infrastructure (local machine or VPS), ensuring privacy, operational control, and local data persistence.

- Gateway Model: The central Node.js orchestration layer that routes communication between the user’s messaging platforms (Transports) and the AI’s cognitive runtime, managing action execution.

- Shell Access (CLI): The ability of the AI agent to execute command-line interface commands directly on the host machine, enabling file management, software installation, and deep system interaction.

- Filesystem as Memory: The architectural principle where the agent's long-term context, persona definition (SOUL.md), and user preferences are stored transparently in editable local text files rather than opaque databases.

- Transports: The various messaging platforms (e.g., Telegram, WhatsApp, Discord) through which the user interacts with the agent, connected via persistent WebSocket or polling connections.